Question 1

1.a.

A Markov chain is a stochastic process where the future state depends only on the current state, not on the past states.[1]

Let

- $X_n$: discount level of the policyholder after $n$ years

- $X_n \in \{0, 1, 2, 3\}$, where:
  - State 0: no discount (0%)
  - State 1: 15% discount
  - State 2: 25% discount
  - State 3: 40% discount

- $n = 0, 1, 2, \dots$ representing years

$\{X_n, n \ge 0\}$ represents the premium discount status at the end of year $n$.

The Markov property holds because next year’s discount depends only on the current year’s discount and whether a claim was made.

1.b.

From the summary notes (Rangkuman):

- The transition probability $P_{ij}$ is the probability of transitioning from state $i$ to state $j$ in one time step.

- The transition matrix $P = [P_{ij}]$ collects all the $P_{ij}$ in matrix form.

Given:

Then:

- From state 0 (no discount): with no claim → state 1; with claim → state 0

- From state 1 (15%): with no claim → state 2; with claim → state 0

- From state 2 (25%): with no claim → state 3; with claim → state 1

- From state 3 (40%): with no claim → state 3; with claim → state 2

Transition Matrix:

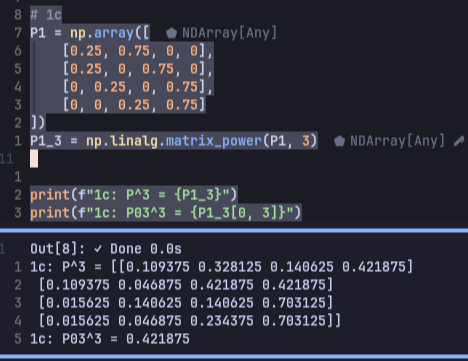
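The four transition rules can also be written symbolically as a cross-check on the matrix above. Here $p$ is a symbol introduced only for this sketch. It stands for the one-year claim probability from the problem statement, whose numeric value goes in its place. Rows and columns are ordered by state 0, 1, 2, 3:

$$
P = \begin{pmatrix}
p & 1-p & 0 & 0 \\
p & 0 & 1-p & 0 \\
0 & p & 0 & 1-p \\
0 & 0 & p & 1-p
\end{pmatrix}
$$

Each row sums to $p + (1 - p) = 1$, as a transition matrix must.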

1.c.

We need:

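From Chapman-Kolmogorov: $P_{ij}^{(n+m)} = \sum_{k} P_{ik}^{(n)} P_{kj}^{(m)}$, so the $n$-step transition matrix is $P^{(n)} = P^n$.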

Using Python to compute the required power of $P$:
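The following is a minimal pure-Python sketch of the Chapman-Kolmogorov computation. It builds the transition matrix from the rules in 1.b. and raises it to a power by repeated squaring. The claim probability `p = 1/10` is a placeholder assumption, not the value from the problem. The helper names (`ncd_matrix`, `mat_mul`, `mat_pow`) are hypothetical. Exact fractions are used so results can be compared without rounding error.

```python
from fractions import Fraction


def ncd_matrix(p):
    """Transition matrix for states 0..3 (0%, 15%, 25%, 40% discount).

    p is the probability of a claim in a year (placeholder parameter).
    """
    q = 1 - p  # probability of no claim
    return [
        [p, q, 0, 0],  # state 0: claim -> 0, no claim -> 1
        [p, 0, q, 0],  # state 1: claim -> 0, no claim -> 2
        [0, p, 0, q],  # state 2: claim -> 1, no claim -> 3
        [0, 0, p, q],  # state 3: claim -> 2, no claim -> 3
    ]


def mat_mul(A, B):
    """Ordinary matrix product of two lists of lists."""
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


def mat_pow(P, n):
    """n-step matrix P^(n) = P^n, computed by repeated squaring."""
    size = len(P)
    result = [[1 if i == j else 0 for j in range(size)] for i in range(size)]
    base = P
    while n > 0:
        if n & 1:
            result = mat_mul(result, base)
        base = mat_mul(base, base)
        n >>= 1
    return result


p = Fraction(1, 10)  # placeholder claim probability (assumption)
P = ncd_matrix(p)
P2 = mat_pow(P, 2)
for row in P2:
    print([str(x) for x in row])
```

To get the entries of $P^{(n)}$ needed above, substitute the problem's claim probability for `p` and the required number of years for the exponent.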

1.d.

We need: $P_{32} = P(X_{n+1} = 2 \mid X_n = 3)$

This is a one-step transition from state 3 to state 2.

$P_{ij}$ represents the probability of transitioning from state $i$ to state $j$ in one time step.[1]

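By the rules in 1.b., the move from state 3 (40%) to state 2 (25%) happens exactly when a claim is made, so $P_{32}$ equals the one-year claim probability. Reading it from the transition matrix in 1.b.: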

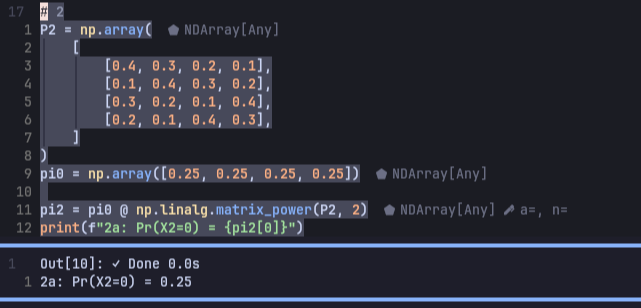

Question 2

Given:

- State space:

- Transition matrix:

- Initial distribution:

2.a.

From Chapman-Kolmogorov:

- The distribution at time $n$ is $\pi^{(n)} = \pi^{(0)} P^n$, where $\pi^{(n)}_j = P(X_n = j)$.

We need the probability of being in state 0 at time 2 ($P(X_2 = 0) = \pi^{(2)}_0$):

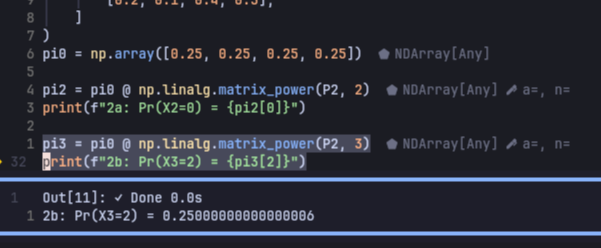
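Below is a minimal sketch of $\pi^{(n)} = \pi^{(0)} P^n$, computed by repeated row-vector times matrix steps. The 3-state matrix `P` and initial distribution `pi0` are hypothetical placeholders, because the problem's actual values are not reproduced here. Only the method carries over to the answer, not the number. The function names are illustrative.

```python
from fractions import Fraction as F

# Hypothetical placeholder chain on states {0, 1, 2}; substitute the
# matrix and initial distribution from the problem statement.
P = [
    [F(7, 10), F(2, 10), F(1, 10)],
    [F(0), F(6, 10), F(4, 10)],
    [F(5, 10), F(0), F(5, 10)],
]
pi0 = [F(1, 2), F(1, 2), F(0)]


def step(dist, P):
    """One application of pi^(n+1) = pi^(n) P."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]


def distribution_at(pi0, P, n):
    """Return pi^(n) = pi^(0) P^n by stepping n times."""
    dist = list(pi0)
    for _ in range(n):
        dist = step(dist, P)
    return dist


pi2 = distribution_at(pi0, P, 2)
print("P(X_2 = 0) =", pi2[0], "=", float(pi2[0]))
```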

2.b.

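We need the probability of being in state 2 at time 3 ($P(X_3 = 2) = \pi^{(3)}_2$). Since $\pi^{(3)} = \pi^{(2)} P$, this equals $\sum_i \pi^{(2)}_i P_{i2}$, reusing the distribution at time 2 from 2.a.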
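Instead of stepping the distribution forward, Chapman-Kolmogorov can be unrolled into a sum over sample paths: $P(X_3 = 2) = \sum_{i_0, i_1, i_2} \pi^{(0)}_{i_0} P_{i_0 i_1} P_{i_1 i_2} P_{i_2 2}$. The sketch below enumerates these paths. `P` and `pi0` are again hypothetical placeholders, and `prob_state_at` is an illustrative helper name.

```python
from fractions import Fraction as F
from itertools import product

# Hypothetical placeholder chain on states {0, 1, 2}; substitute the
# matrix and initial distribution from the problem statement.
P = [
    [F(7, 10), F(2, 10), F(1, 10)],
    [F(0), F(6, 10), F(4, 10)],
    [F(5, 10), F(0), F(5, 10)],
]
pi0 = [F(1, 2), F(1, 2), F(0)]


def prob_state_at(pi0, P, target, n):
    """P(X_n = target): sum of pi0[i0] * P[i0][i1] * ... * P[i_{n-1}][target]
    over every path (i0, ..., i_{n-1})."""
    if n == 0:
        return pi0[target]
    total = F(0)
    for path in product(range(len(P)), repeat=n):
        full = path + (target,)  # append the fixed final state
        prob = pi0[full[0]]
        for a, b in zip(full, full[1:]):
            prob *= P[a][b]  # multiply one-step transition probabilities
        total += prob
    return total


print("P(X_3 = 2) =", prob_state_at(pi0, P, 2, 3))
```

The number of paths grows as $|S|^n$, so enumeration is only practical for small $n$. For larger $n$, the vector-matrix stepping of $\pi^{(n)} = \pi^{(0)} P^n$ scales better.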

Question 3

Given transition matrix:

We need to classify which states are transient and which are recurrent. (See Recurrent vs Transient)

By definition:

- State $j$ is accessible from state $i$ (written $i \to j$) if $P_{ij}^{(n)} > 0$ for some $n \ge 0$.

- Two states communicate if they are mutually accessible.

- Communication is reflexive, symmetric, and transitive (an equivalence relation).

- States that communicate form a class.

- Classes are either identical or disjoint.

- A chain is irreducible if there is only one class.

First Step Analysis:

- From state 0: Can go to states 0, 1, 2

- From state 1: Can go to states 0, 1, 2

- From state 2: Can go to states 3, 4, 5

- From state 3: Can go to states 0, 1, 2, 5

- From state 4: Can go to state 2

- From state 5: Can only go to state 5 (absorbing)

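So:

- {0, 1, 2, 3, 4} form a single class:

  - 0 → 1 (via $P_{01} > 0$) and 1 → 0 (via $P_{10} > 0$)

  - 2 → 3 → 2 and 2 → 4 → 2, so 2, 3 and 4 communicate

  - 0 → 2 and 2 → 3 → 0, so {0, 1} and {2, 3, 4} also communicate

- {5}:

  - State 5 is absorbing ($P_{55} = 1$)

  - It’s a singleton class.

Therefore:

- Transient states: 0, 1, 2, 3, 4 (their class is not closed: 2 → 5 and 3 → 5, but 5 never leads back)

- Recurrent states: 5 (a closed class in a finite chain)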
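The class structure can be checked mechanically. The sketch below copies the one-step transitions listed in the First Step Analysis into a successor map; only whether each entry is positive matters. It then computes reachability by depth-first search and groups mutually accessible states into classes. Finally, it marks a class recurrent when the class is closed, which is the criterion for a finite chain. The dictionary and function names are illustrative.

```python
# One-step transitions with positive probability, copied from the
# First Step Analysis (state -> states reachable in one step).
succ = {
    0: {0, 1, 2},
    1: {0, 1, 2},
    2: {3, 4, 5},
    3: {0, 1, 2, 5},
    4: {2},
    5: {5},
}


def reachable(start, succ):
    """All j with P_{start,j}^{(n)} > 0 for some n >= 0 (depth-first search)."""
    seen = {start}
    stack = [start]
    while stack:
        i = stack.pop()
        for j in succ[i]:
            if j not in seen:
                seen.add(j)
                stack.append(j)
    return seen


def classify(succ):
    reach = {i: reachable(i, succ) for i in succ}
    classes = []
    for i in sorted(succ):
        # j is in i's class iff i -> j and j -> i
        cls = {j for j in reach[i] if i in reach[j]}
        if cls not in classes:
            classes.append(cls)
    # In a finite chain, a class is recurrent iff it is closed.
    recurrent = set()
    for cls in classes:
        if all(reach[i] <= cls for i in cls):
            recurrent |= cls
    transient = set(succ) - recurrent
    return classes, transient, recurrent


classes, transient, recurrent = classify(succ)
print("classes:", classes)
print("transient:", sorted(transient), "recurrent:", sorted(recurrent))
```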

Question 4

Given:

- Demand distribution: , , , ,

- Policy: $(s, S)$ with $s = 1$ and $S = 4$

So:

- If inventory after demand ≤ s (= 1), order up to S (= 4)

- If inventory after demand > s (= 1), no order

4.a.

$P_{41}$ = probability of transitioning from state 4 to state 1.

Analysis

- Demand = 0 → Inventory = 4 → Since 4 > s = 1, no order → Next state = 4

- Demand = 1 → Inventory = 3 → Since 3 > 1, no order → Next state = 3

- Demand = 2 → Inventory = 2 → Since 2 > 1, no order → Next state = 2

- Demand = 3 → Inventory = 1 → Since 1 ≤ s, order to 4 → Order 3 units → Next state = 4

- Demand = 4 → Inventory = 0 → Since 0 ≤ s, order to 4 → Order 4 units → Next state = 4

So:

- From state 4, the next states possible are: 4, 3, 2 (all > 1)

- There is no scenario where the next state is 1, so $P_{41} = 0$.
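The case analysis above can be reproduced by enumerating demand values. This sketch encodes the rule exactly as used in the analysis. Demand is subtracted without going below 0. If the remaining stock is at most $s$, stock is reordered up to $S$, and the post-order stock is taken as the next state. The demand probabilities in `pmf` are hypothetical placeholders. Only which next states appear carries over, not their probabilities.

```python
from collections import defaultdict
from fractions import Fraction as F

s, S = 1, 4

# Hypothetical placeholder demand pmf P(D = k), k = 0..4 (sums to 1);
# substitute the values from the problem statement.
pmf = [F(1, 10), F(2, 10), F(3, 10), F(2, 10), F(2, 10)]


def next_state(x, d, s=s, S=S):
    """Rule from the analysis: subtract demand (stock cannot go below 0),
    then reorder up to S if the remaining stock is <= s."""
    after_demand = max(x - d, 0)
    return S if after_demand <= s else after_demand


def transition_row(x, pmf):
    """Distribution of the next state given current state x."""
    row = defaultdict(F)  # F() is Fraction(0)
    for d, p in enumerate(pmf):
        row[next_state(x, d)] += p
    return dict(row)


row4 = transition_row(4, pmf)
print("row from state 4:", row4)
print("P_41 =", row4.get(1, 0))
```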

4.b.

$P_{04}$ = probability of transitioning from state 0 to state 4.

Analysis

- Demand = 0 → Inventory = 0 → Since 0 ≤ s = 1, order to S = 4 → Order 4 units → Next state = 4

- Demand = 1 → Inventory = 0 → Since 0 ≤ s, order to 4 → Next state = 4

- Demand = 2 → Inventory = 0 → Since 0 ≤ s, order to 4 → Next state = 4

- Demand = 3 → Inventory = 0 → Since 0 ≤ s, order to 4 → Next state = 4

- Demand = 4 → Inventory = 0 → Since 0 ≤ s, order to 4 → Next state = 4

All scenarios lead to state 4, so $P_{04} = 1$.
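This sketch builds the whole transition matrix on states 0 to 4 under the same rule, again with a hypothetical placeholder `pmf`. The flag `reorder_before_demand` is an added illustration. Some texts instead define the state as the stock on hand at the end of a period, before replenishment. Under that convention, a state at or below $s$ is restocked to $S$ before the next demand. Comparing the two settings shows how entries such as $P_{41}$ and $P_{04}$ depend on the modeling convention, so the definition in the problem statement decides which applies.

```python
from fractions import Fraction as F

s, S = 1, 4
# Hypothetical placeholder demand pmf P(D = k), k = 0..4 (sums to 1).
pmf = [F(1, 10), F(2, 10), F(3, 10), F(2, 10), F(2, 10)]


def transition_matrix(pmf, s, S, reorder_before_demand=False):
    """(s, S) transition matrix on states 0..S.

    reorder_before_demand=False: demand first, then reorder to S if the
        remaining stock is <= s; the next state is the post-order stock
        (the rule used in the analysis above).
    reorder_before_demand=True: a state <= s is first restocked to S,
        then demand is subtracted; the next state is the stock left over.
    """
    P = [[F(0)] * (S + 1) for _ in range(S + 1)]
    for x in range(S + 1):
        for d, p in enumerate(pmf):
            if reorder_before_demand:
                start = S if x <= s else x
                y = max(start - d, 0)
            else:
                y = max(x - d, 0)
                if y <= s:
                    y = S
            P[x][y] += p
    return P


P_analysis = transition_matrix(pmf, s, S)
P_alt = transition_matrix(pmf, s, S, reorder_before_demand=True)
print("analysis rule: P_04 =", P_analysis[0][4], " P_41 =", P_analysis[4][1])
print("restock first: P_04 =", P_alt[0][4], " P_41 =", P_alt[4][1])
```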